
Estimation of subject-to-camera distance in facial photographs + free calculator

Facial Comparison

Published on September 16, 2022

Written by Enrique Bermejo

Facial biometrics play an essential role in law enforcement and forensic science. When comparing facial traits for human identification in photographs or videos, the analysis must account for several factors, such as illumination, pose, or expression, that impair the application of common identification techniques.

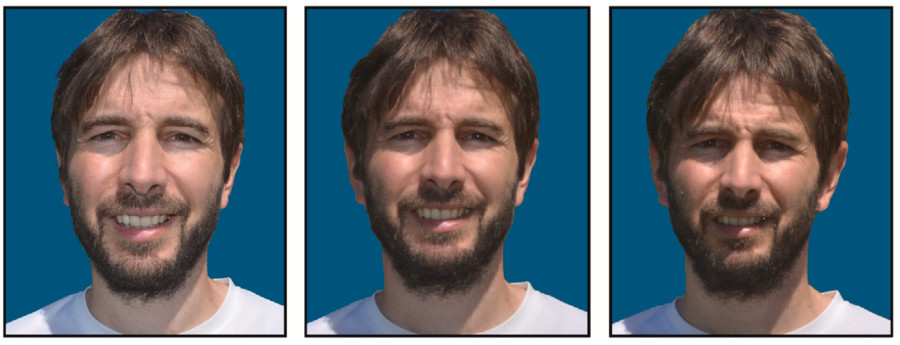

The apparent shape and proportions of facial features can change drastically depending on the distance between the subject and the camera when the picture is taken. This effect, known as perspective distortion, can severely affect the outcome of a comparative analysis.

Hence, knowing the subject-to-camera distance at which the photograph was taken can help determine the degree of distortion, improve the accuracy of computer-aided recognition tools, and increase the reliability of human identification and subsequent analyses.
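To illustrate why the subject-to-camera distance determines the degree of distortion, here is a minimal sketch based on a simple pinhole camera model. This is an illustrative assumption, not the FacialSCDnet method. Under this model a feature at depth Z is scaled by f/Z, so a feature closer to the camera (such as the nose tip) is magnified by (Z + d)/Z = 1 + d/Z relative to a feature d farther back (such as the ears). The 10 cm nose-to-ear depth offset and the function name `relative_magnification` are hypothetical choices made for this example.

```python
# Pinhole-camera sketch of perspective distortion (illustrative assumption only).
# A point at depth Z projects with scale f / Z, so the ratio of scales between a
# near feature (depth Z) and a far feature (depth Z + d) is (Z + d) / Z.


def relative_magnification(distance_m: float, depth_offset_m: float = 0.10) -> float:
    """Scale of a feature at `distance_m` relative to one `depth_offset_m` farther away.

    The default 0.10 m offset is a hypothetical nose-tip-to-ear depth.
    """
    if distance_m <= 0:
        raise ValueError("distance must be positive")
    return (distance_m + depth_offset_m) / distance_m


if __name__ == "__main__":
    # Show how the near/far size ratio approaches 1 as the camera moves away.
    for z in (0.5, 1.0, 2.0, 4.0, 6.0):
        excess_pct = (relative_magnification(z) - 1.0) * 100
        print(f"{z:>4.1f} m: nose scaled {excess_pct:5.1f}% more than ears")
```

With these assumed values, the nose appears about 20% larger relative to the ears at 0.5 m but less than 2% larger at 6 m, which is consistent with the intuition that changes in distortion become small at larger distances.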

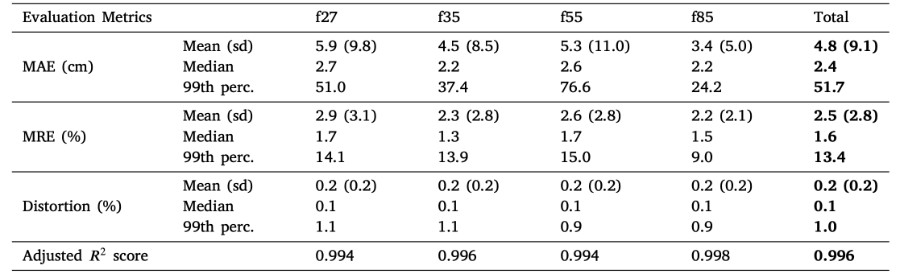

In this article, we propose FacialSCDnet, a deep learning approach to estimate the subject-to-camera distance of facial photographs. Furthermore, we introduce a novel evaluation metric, based on changes in facial distortion at different distances, designed to guide the learning process. To validate our proposal, we assembled a new dataset of more than 200,000 facial photographs, both synthetic and real, taken at several distances. Our approach is fully automatic and provides a numerical distance estimate for distances of up to six meters, beyond which changes in facial distortion are not significant. The proposed method achieves an average error below 6 cm in subject-to-camera distance (see table below) for facial photographs in any frontal or lateral head pose, and it is robust to facial hair, glasses, and partial occlusion.

Table: …deviation, median, and 99th percentile error results summarized for each metric, together with the coefficient of determination.
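The summary statistics named in the table caption can be computed from paired ground-truth and predicted distances. The following is a minimal standard-library sketch, not the authors' evaluation code. It assumes the error is the absolute difference in centimeters, uses the sample standard deviation, and computes the 99th percentile by linear interpolation; `summarize_distance_errors` is a hypothetical helper name, and the sample values are made up for illustration.

```python
import statistics
from typing import Dict, Sequence


def summarize_distance_errors(true_cm: Sequence[float], pred_cm: Sequence[float]) -> Dict[str, float]:
    """Summarize absolute subject-to-camera distance errors (hypothetical helper)."""
    if len(true_cm) != len(pred_cm) or len(true_cm) < 2:
        raise ValueError("need two equal-length sequences with at least two samples")

    # Assumed error definition: absolute difference between truth and prediction.
    errors = [abs(t - p) for t, p in zip(true_cm, pred_cm)]

    # Coefficient of determination: R^2 = 1 - SS_res / SS_tot.
    mean_true = statistics.fmean(true_cm)
    ss_res = sum((t - p) ** 2 for t, p in zip(true_cm, pred_cm))
    ss_tot = sum((t - mean_true) ** 2 for t in true_cm)

    return {
        "mean": statistics.fmean(errors),
        "std": statistics.stdev(errors),  # sample standard deviation
        "median": statistics.median(errors),
        # quantiles(n=100) returns 99 cut points; the last one is the 99th percentile.
        # method="inclusive" interpolates linearly within the observed range.
        "p99": statistics.quantiles(errors, n=100, method="inclusive")[-1],
        "r2": 1.0 - ss_res / ss_tot if ss_tot > 0 else float("nan"),
    }


if __name__ == "__main__":
    # Made-up ground-truth and predicted distances in centimeters.
    truth = [100.0, 200.0, 300.0, 400.0]
    preds = [110.0, 190.0, 300.0, 420.0]
    print(summarize_distance_errors(truth, preds))
```

Linear interpolation (`method="inclusive"`) is used here because the default exclusive method can extrapolate beyond the largest observed error on small samples.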

Download the complete article. The data used is available upon request.